- Home

- Details

- Registry

- RSVP

- Blog

- Hqplayer microrendu remote android

- Hand of god i doser

- Pokemon sun and moon game

- Prince of tennis dubbed episode 1

- 3dkink game review

- Toyota rav4 2004 valve cover gasket

- Megaman x8 pc download full version english

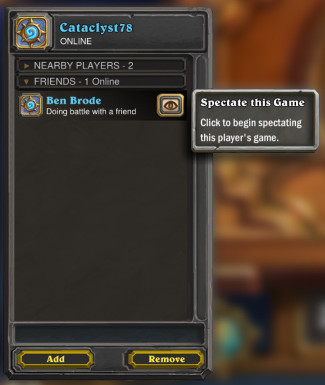

- How to spectate a starcraft 2 game

- Solstheim morrowind

- Phraseexpress download

- Download only fl studio 12-4-2 crack file

- Mestrenova torrent

- Bunker hill security camera needs to b reset

- Login temporarily not permitted protonvpn

A lot of people here seem to be underestimating the difficulty of this problem. There are several incorrect comments saying that in SC1 AIs have already been able to beat professionals. Right now they are nowhere near that level.

Go is a discrete game where the game state is 100% known at all times. StarCraft is a continuous game, and the game state is not 100% known at any given time. This alone makes it a much harder problem than Go. Not to mention that the game itself is more complex, in the sense that Go, despite being a very hard game for humans to master, is composed of a few very simple and well-defined rules. StarCraft is much more open-ended, has many more rules, and as a result it's much harder to build a representation of game state that is conducive to effective deep learning.

I do think that eventually we will get an AI that can beat humans, but it will be a non-trivial problem to solve, and it may take some time to get there. I think a big component is not really machine learning but how to represent state at any given time, which will necessarily involve a lot of human tweaking to distill down which things really influence winning.

I agreed with everything you said until here. Developing good representations of state is precisely what today's machine learning is so good at. This is the key contribution of deep learning. You seem to be supposing that a human expert is going to be carefully designing a set of variables to track, and in doing so conveying what features of the input to pay attention to and what can be ignored. Presumably the ML can then handle figuring out the optimal action to take in response to those variables. I think it's much more likely to be the other way around.

ML is really good at taking high-dimensional input with lots of noise and figuring out how to map that to meaningful (to it, if not to us) high-level variables. In other words, modern AI is good at perception. What it's significantly less good at compared to humans is what might formally be called the policy problem. Given high-level variables that describe the situation, what's the best course of action? This involves planning. We think of it in terms of breaking the problem into sub-objectives, considering possible courses of action, decomposing a high-level plan into a sequence of directly executable actions, etc. An AI might "think" of this problem in different terms than these, but it seems like it still has to do this kind of work if it is going to have a chance to succeed. We don't have obvious ways to model this part of the problem.

For the perception/representation building problem, I can almost guarantee the solution is going to be a ConvNet to process individual frames combined with a recurrent layer to track state over time.
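To make the "ConvNet per frame plus a recurrent layer" idea from this discussion a bit more concrete, here is a minimal pure-Python sketch. Everything in it is an assumption made for illustration: the function names (`conv2d_valid`, `encode_frame`, `recurrent_step`, `track_state`), the tiny 4x4 frames, and the hand-picked weights are hypothetical, and a real system would learn its weights with a deep learning framework rather than hard-code them. The point is only to show how a recurrent state can remember a unit that was seen in one frame and then hidden in the next, which is the partial-information issue raised above.

```python
import math

def conv2d_valid(frame, kernel):
    """Slide `kernel` over `frame` (no padding, stride 1), then apply ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for i in range(len(frame) - kh + 1):
        row = []
        for j in range(len(frame[0]) - kw + 1):
            s = sum(frame[i + a][j + b] * kernel[a][b]
                    for a in range(kh) for b in range(kw))
            row.append(max(0.0, s))
        out.append(row)
    return out

def encode_frame(frame, kernels):
    """Per-frame perception: one feature per kernel (global average pooling)."""
    features = []
    for kernel in kernels:
        cells = [v for row in conv2d_valid(frame, kernel) for v in row]
        features.append(sum(cells) / len(cells))
    return features

def recurrent_step(hidden, features, w_in, w_rec):
    """Elman-style update: h_t = tanh(W_in x_t + W_rec h_{t-1})."""
    new_hidden = []
    for i in range(len(hidden)):
        total = sum(w_in[i][j] * features[j] for j in range(len(features)))
        total += sum(w_rec[i][j] * hidden[j] for j in range(len(hidden)))
        new_hidden.append(math.tanh(total))
    return new_hidden

def track_state(frames, kernels, w_in, w_rec):
    """Run the frame encoder and the recurrent update over a frame sequence."""
    hidden = [0.0] * len(w_in)
    for frame in frames:
        hidden = recurrent_step(hidden, encode_frame(frame, kernels), w_in, w_rec)
    return hidden

# Hypothetical, hand-picked weights (a trained model would learn these).
kernels = [[[1, 1], [1, 1]],     # responds to any bright blob
           [[1, -1], [1, -1]]]   # responds to a left-to-right intensity drop
w_in = [[1.0, 0.0], [0.0, 1.0]]
w_rec = [[0.5, 0.0], [0.0, 0.5]]

empty_frame = [[0.0] * 4 for _ in range(4)]
unit_frame = [row[:] for row in empty_frame]
unit_frame[1][1] = 1.0           # a single visible "unit"

# The unit is visible in the first frame, then disappears into the fog.
seen_then_hidden = track_state([unit_frame, empty_frame], kernels, w_in, w_rec)
never_seen = track_state([empty_frame, empty_frame], kernels, w_in, w_rec)
print(seen_then_hidden, never_seen)
```

Both sequences end on the same empty frame, yet the recurrent state differs: it stays nonzero when the unit was seen earlier and is all zeros when nothing was ever seen. A stateless per-frame model could not tell these two situations apart.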
